Modern applications are no longer just about exposing APIs or handling requests. They are increasingly expected to reason, adapt, and interact intelligently with data and users. This shift is where the Model Context Protocol (MCP) comes into play.

MCP is an emerging open protocol that standardizes how applications provide context and tools to large language models (LLMs). It acts as a bridge between the application and AI systems, enabling models to operate with a deeper understanding of the domain while remaining controllable and predictable. Take a look at the documentation: link.

Big picture

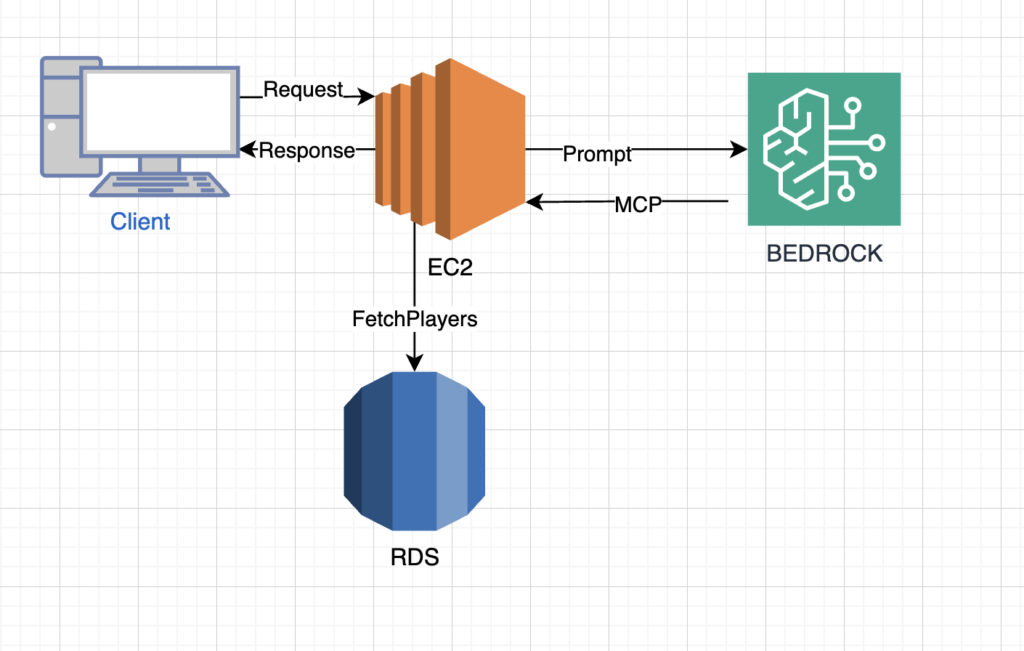

To expose domain data to an AI agent, I implemented an MCP server using Spring AI, which acts as a bridge between the model and backend services. The server defines an MCP tool responsible for fetching a list of players along with their win rates from the database. This tool is registered within the Spring AI context and exposed in a way that the AI agent (e.g., powered by a Bedrock-hosted model) can invoke it dynamically during reasoning. When the agent needs to build an optimal squad, it calls the MCP tool, receives structured player statistics, and uses that data to generate informed decisions. This setup keeps the model stateless while delegating data retrieval and business logic to the MCP layer, ensuring both flexibility and clean separation of concerns.

For the MVP, I kept the integration intentionally simple by exposing a REST controller that accepts a raw string describing the squad generation logic and passes it directly to the AI layer. Inside the service, a Spring AI ChatClient is used to construct a prompt that instructs the model to build two squads of six players based on win rate, while also embedding the provided generation logic. When the request is executed, the model can autonomously decide to call the MCP tool to fetch player data, combine it with the prompt instructions, and return the final squad composition as plain text. This lightweight approach allows rapid iteration without complex orchestration, making it ideal for validating how well the agent interacts with MCP tools before introducing stricter schemas or structured outputs.

Java server

The Java server is built as a modular Spring Boot application: Spring Boot provides rapid setup and dependency management, and Spring AI integrates with the AI agent layer. The core of the system exposes MCP tools as REST-like endpoints that the agent can invoke, with each tool encapsulating specific business logic, such as fetching players and their win rates. Data access is handled via Spring Data JPA, which simplifies interaction with the database and abstracts query complexity. The server is packaged as a runnable JAR using Maven, making it easy to deploy on a lightweight EC2 instance. This setup keeps the architecture clean: Spring Boot manages the application lifecycle, Spring AI handles communication with the model, and the MCP layer defines a clear contract between AI reasoning and backend data services.

```java
import org.springframework.ai.tool.annotation.Tool;
import org.springframework.stereotype.Component;

import java.util.List;

@Component
public class PlayerTools {

    private final PlayerRepository playerRepository;

    // Constructor injection: Spring supplies the repository bean.
    public PlayerTools(PlayerRepository playerRepository) {
        this.playerRepository = playerRepository;
    }

    // The description is what the model sees when deciding whether to call the tool.
    @Tool(description = "Fetch all players with their win rates")
    public List<Player> getPlayers() {
        return playerRepository.findAllPlayingNextMatch();
    }
}
```

```java
import org.springframework.ai.chat.client.ChatClient;
import org.springframework.stereotype.Service;

@Service
public class SquadService {

    private final ChatClient chatClient;
    private final PlayerTools playerTools;

    public SquadService(ChatClient.Builder builder, PlayerTools playerTools) {
        this.chatClient = builder.build();
        this.playerTools = playerTools;
    }

    public String generateSquad(String generationLogic) {
        return chatClient.prompt()
                .user("Build the 2 squads of 6 players based on win rate. Generation logic: "
                        + generationLogic)
                // Register the @Tool methods so the model can call them during this request.
                .tools(playerTools)
                .call()
                .content();
    }
}
```

Connecting AI

To connect the app running on EC2 to the model, assign the instance an IAM role that allows access to Amazon Bedrock, and invoke the model directly from the Spring application using Spring AI. Bedrock provides inference APIs like InvokeModel, while Spring AI offers built-in support for Bedrock chat integrations, so you can avoid dealing with low-level AWS SDK calls and focus on application logic.

```yaml
spring:
  ai:
    bedrock:
      aws:
        region: us-east-1
      converse:
        chat:
          enabled: true
          options:
            model: anthropic.claude-3-5-sonnet
```

When using Amazon Bedrock, the cost model is straightforward but important to understand early. You are not paying for “running AI servers”; instead, you pay per use, based mainly on the number of tokens processed by the model (input + output). Each model available in Bedrock (e.g., Claude, Titan, etc.) has its own pricing per 1,000 tokens, so more complex prompts or larger responses directly increase cost.
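To make the per-token pricing concrete, here is a minimal sketch of a cost estimate for a single request. The class name, method name, and both prices are assumptions chosen for illustration, not actual Bedrock rates; check the Bedrock pricing page for the model you use. Keep in mind that the player list returned by the MCP tool is sent back to the model, so it counts toward input tokens as well.

```java
import java.math.BigDecimal;
import java.math.RoundingMode;

public class BedrockCostEstimate {

    // Hypothetical prices in USD per 1,000 tokens (placeholders, not real Bedrock rates).
    static final BigDecimal INPUT_PRICE_PER_1K = new BigDecimal("0.003");
    static final BigDecimal OUTPUT_PRICE_PER_1K = new BigDecimal("0.015");

    // cost = inputTokens / 1000 * inputPrice + outputTokens / 1000 * outputPrice
    static BigDecimal estimateCost(long inputTokens, long outputTokens) {
        BigDecimal thousand = BigDecimal.valueOf(1000);
        BigDecimal inputCost = INPUT_PRICE_PER_1K
                .multiply(BigDecimal.valueOf(inputTokens))
                .divide(thousand);
        BigDecimal outputCost = OUTPUT_PRICE_PER_1K
                .multiply(BigDecimal.valueOf(outputTokens))
                .divide(thousand);
        return inputCost.add(outputCost).setScale(6, RoundingMode.HALF_UP);
    }

    public static void main(String[] args) {
        // Example: 2,000 input tokens (prompt plus tool result) and 500 output tokens.
        System.out.println(estimateCost(2000, 500)); // prints 0.013500
    }
}
```

Multiplying the per-request figure by the expected number of squad generations per day gives a rough budget for the MVP.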

Why I chose this approach

This approach was chosen to decouple decision-making logic from application code and delegate it to the AI layer. By leveraging Amazon Bedrock together with Spring AI and MCP tools, squad generation becomes driven by prompts instead of hardcoded algorithms. This makes it possible to adjust the squad-building strategy simply by modifying the input logic or prompt instructions, without introducing new services, redeploying complex logic, or maintaining multiple algorithm implementations. As a result, experimentation with different selection strategies (such as prioritizing win rate, balancing teams, or applying custom constraints) can be done rapidly and with minimal engineering overhead, while the core system remains stable and reusable.
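For contrast, this is roughly what a single hardcoded strategy could look like. It is a sketch under assumptions, not code from the project: the `Player` record with `name` and `winRate` fields, the `Squads` record, and the snake-draft rule are all hypothetical. Every additional strategy would need its own implementation like this one, which is the maintenance cost the prompt-driven approach avoids.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

public class BaselineSquadSplit {

    // Hypothetical shape of a player; the real entity is not shown in this article.
    record Player(String name, double winRate) {}

    record Squads(List<Player> first, List<Player> second) {}

    // Snake draft: sort by win rate descending, then assign in the order
    // first, second, second, first, and repeat, to keep the squads balanced.
    static Squads split(List<Player> players) {
        List<Player> sorted = new ArrayList<>(players);
        sorted.sort(Comparator.comparingDouble(Player::winRate).reversed());

        List<Player> first = new ArrayList<>();
        List<Player> second = new ArrayList<>();
        for (int i = 0; i < sorted.size(); i++) {
            // Positions 0 and 3 in every group of four go to the first squad.
            boolean toFirst = (i % 4 == 0) || (i % 4 == 3);
            (toFirst ? first : second).add(sorted.get(i));
        }
        return new Squads(first, second);
    }

    public static void main(String[] args) {
        // Twelve made-up players with distinct win rates.
        List<Player> players = new ArrayList<>();
        for (int i = 1; i <= 12; i++) {
            players.add(new Player("P" + i, 0.30 + 0.05 * i));
        }
        Squads squads = split(players);
        System.out.println("Squad 1: " + squads.first());
        System.out.println("Squad 2: " + squads.second());
    }
}
```

A deterministic baseline like this can also serve as a sanity check when comparing the squads the model returns.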
